Operationalising Responsible AI

Led design activities to interrogate the ethical risks of AI systems and translate Australia's AI Ethics Principles into practical, actionable guidance for science teams to apply throughout the development lifecycle.

Overview

Responsible AI principles are widely endorsed but rarely operationalised. This project tackled the gap between high-level ethical commitments and the day-to-day decisions that science teams make when designing, building, and deploying AI systems at CSIRO.

As design lead, I facilitated cross-functional risk assessment activities, synthesised findings into actionable design recommendations, and developed frameworks that embedded responsible AI considerations into the scientific discovery process rather than treating them as an afterthought.

The Challenge

CSIRO had committed to Australia's AI Ethics Principles, among them fairness, transparency, accountability, privacy, and human oversight, but the principles were abstract. Science teams building AI-powered research tools had no clear way to translate them into specific design decisions, technical requirements, or evaluation criteria.

AI systems for scientific research operate in contexts where errors can propagate through published literature, where bias in training data can systematically disadvantage certain research directions, and where the complexity of multi-agent architectures makes it difficult to trace how a system arrived at a particular recommendation.

Approach

I designed a series of structured workshops that brought together product designers, researchers, engineers, data scientists, and domain experts to systematically interrogate the ethical risks of specific AI systems.

I then designed a series of proofs of concept (POCs) to test the feasibility of the approach.

Solution

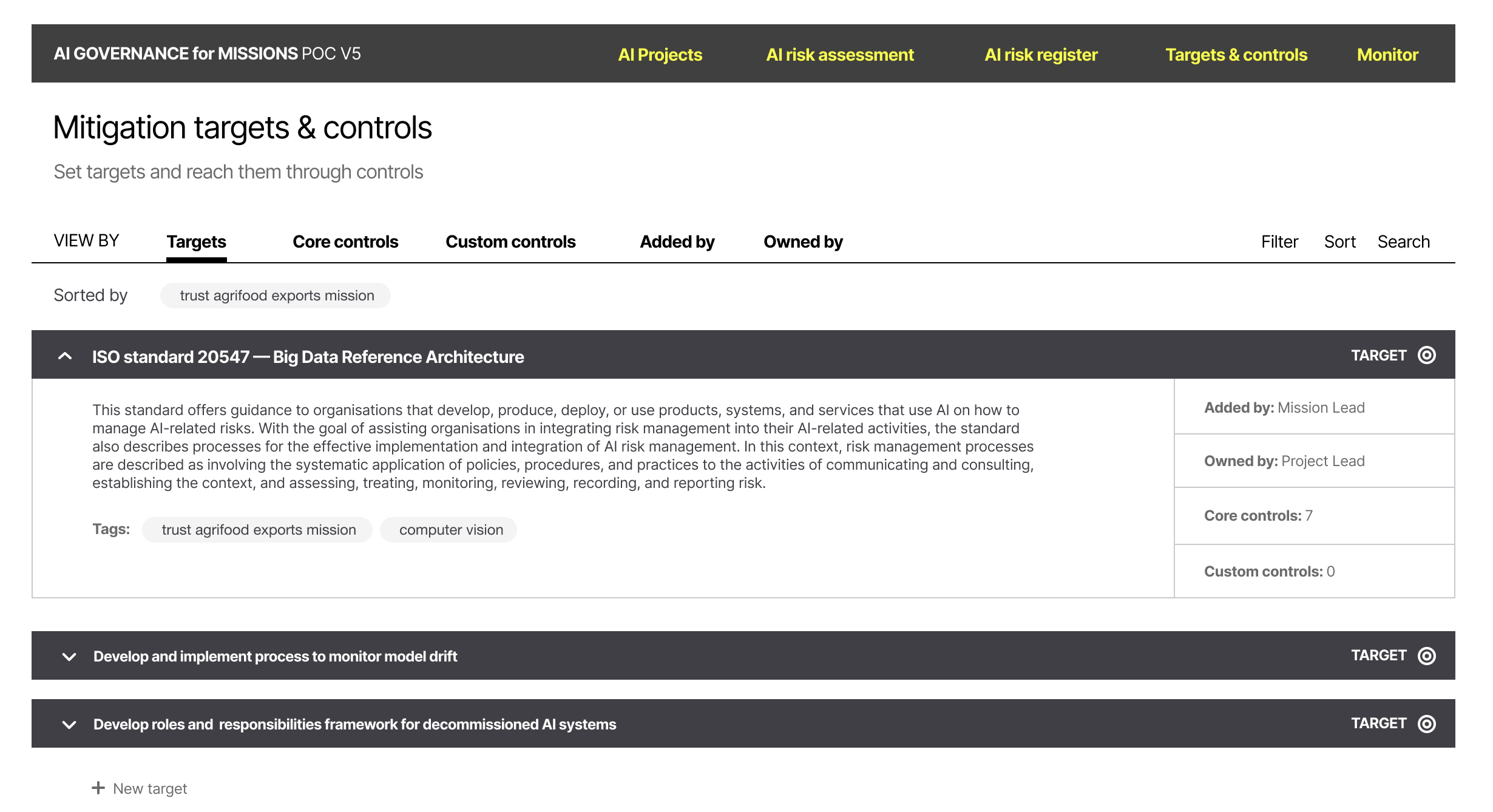

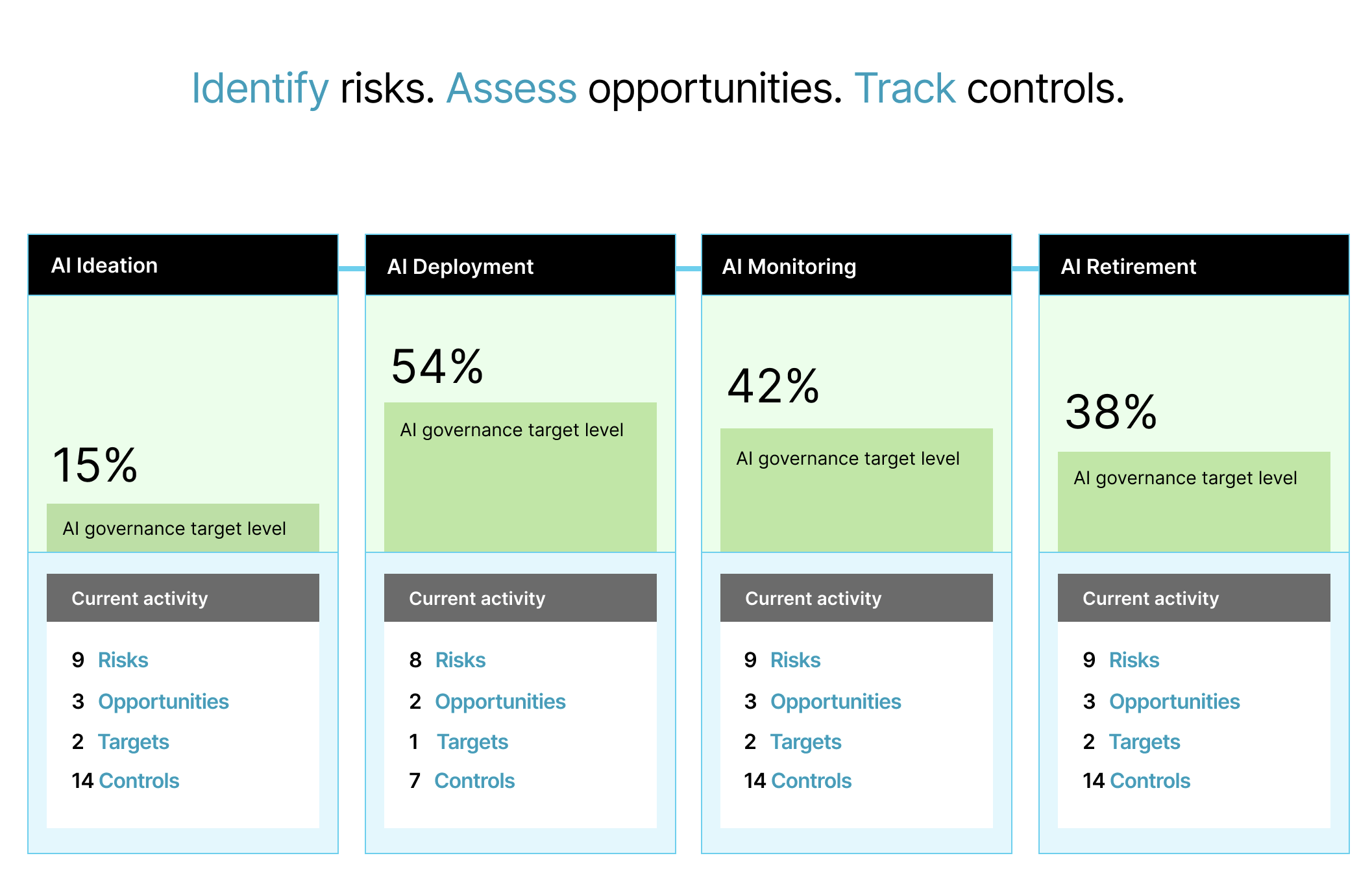

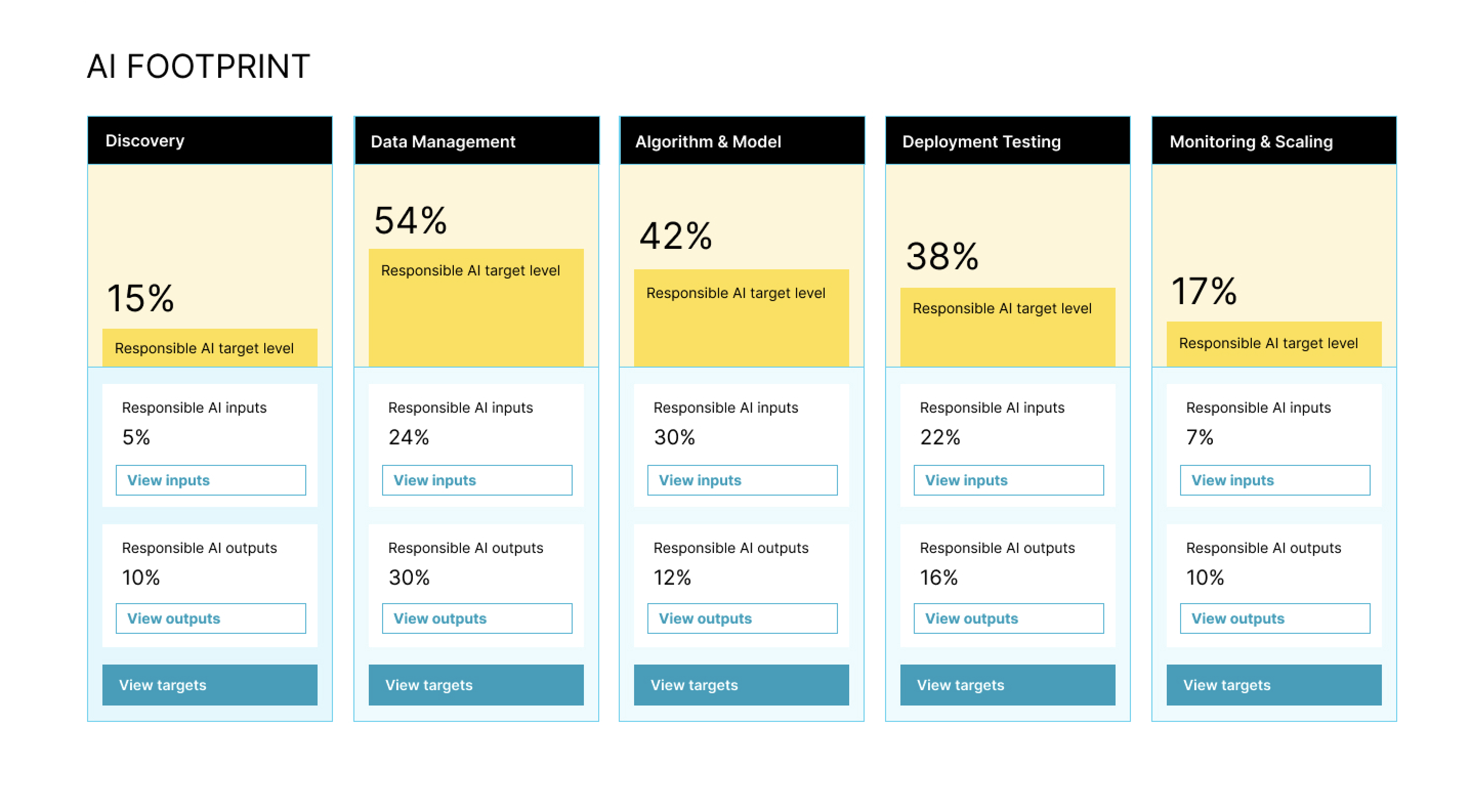

• Designed a proof of concept that integrated a CSIRO ethical-AI risk assessment framework. The POC helped expand the project's problem and solution space beyond a 'risk' lens to include other forms of governance.

• Designed a SaaS product demo that responded to goals identified in the CSIRO Responsible AI Theory of Change process.

• Created design artifacts that mapped relational trust flows between human actors during the use of Responsible AI methods.

• An empirical study that aimed to understand how to capture and manage the ethical risks associated with the use of AI in science.